Entropy: How Much Information Is in a Joke Heard or a Movie Watched for a Second Time?

The meaning of the term “information” is broad and multifaceted. In everyday language, it can refer to anything that we hear or receive. In journalism, information can be facts to report, while in intelligence work, including business intelligence, it may mean discovering patterns and gaining insights. But what does information mean in the context of information theory?

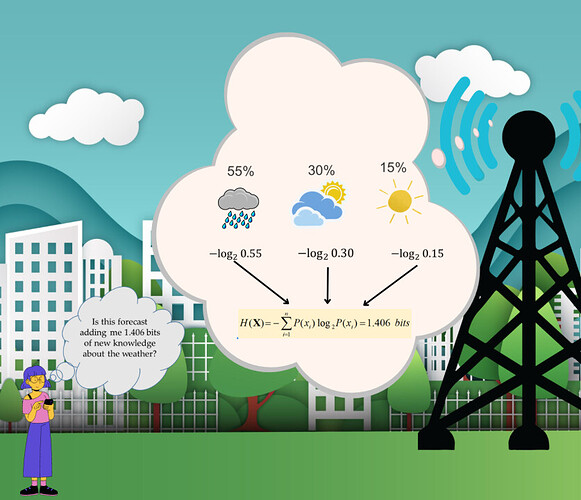

Information theory is a mathematical field that studies how to measure, store, and transmit information efficiently and effectively. It was formalized by Claude Shannon, who defined entropy as a measure of uncertainty, or average surprise. Messages with high entropy carry high uncertainty or surprise, and therefore contain more information. Messages with low entropy carry low uncertainty or surprise, and therefore contain less information.

For example, a joke that we have heard before has low entropy because we already know the punchline. On the other hand, a joke that we have never heard before has high entropy because we are uncertain about the punchline. The same is true for movies, as a movie seen before has low entropy because we are certain about what is going to happen.
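To make the joke example concrete, here is a minimal sketch of Shannon's entropy formula, H = -Σ p · log2(p), measured in bits. The function name `entropy_bits` and the probabilities are illustrative assumptions, not figures from the article: a joke heard before is modeled as one punchline with probability 1, and a new joke as four equally likely punchlines.

```python
import math

def entropy_bits(probabilities):
    """Shannon entropy in bits: H = -sum(p * log2(p)).

    Outcomes with zero probability are skipped, since they contribute nothing.
    """
    return sum(-p * math.log2(p) for p in probabilities if p > 0)

# Hypothetical distributions over possible punchlines (made up for illustration).
heard_before = [1.0]                    # we already know the punchline
never_heard = [0.25, 0.25, 0.25, 0.25]  # four equally plausible punchlines

print(entropy_bits(heard_before))  # 0.0 bits: no surprise, no new information
print(entropy_bits(never_heard))   # 2.0 bits: as uncertain as two fair coin flips
```

The more evenly the probability is spread across possible outcomes, the higher the entropy; once a single outcome is certain, the entropy drops to zero.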

This article introduces the concept of “entropy thinking”: the ability to roughly estimate how much information a message contains. In an age of information overload, from social media to news headlines, we can use entropy thinking to focus on messages that contain new information instead of dwelling on those we already know or can easily predict. This can help us make better decisions about how to allocate our limited time and attention.

What are your thoughts on the concept of information and entropy? Share them in the comments below. Also, take five minutes to read the entire article, where I share my personal perspectives and insights. Feel free to repost it if you find it relevant.
